畳み込みニューラルネットワーク(CNN)の学習には、長い時間そして膨大な計算リソースとエネルギーが必要です。CNNの学習時間を短縮するためには、予算とエネルギーを節約するためにも、学習時間の正確な予測が必要です。しかし、CNNの学習時間は、ハードウェアの性能、ソフトウェア環境、学習パラメータ、そしてCNNのアーキテクチャといった多くの要素により影響を受けます。これらの要素は学習時間に予想外の影響を与える可能性があるため、正確な CNN 学習時間の予測は極めて困難です。

私たちは、任意のNVIDIA GPU上で一定の要件を満たす任意のCNNの学習時間を正確に予測する方法を開発しました。NVIDIAのGPUは、CNNの学習を加速するためによく使用されます。私たちはこの方法を自由に利用できるウェブアプリケーションとして実装しました。

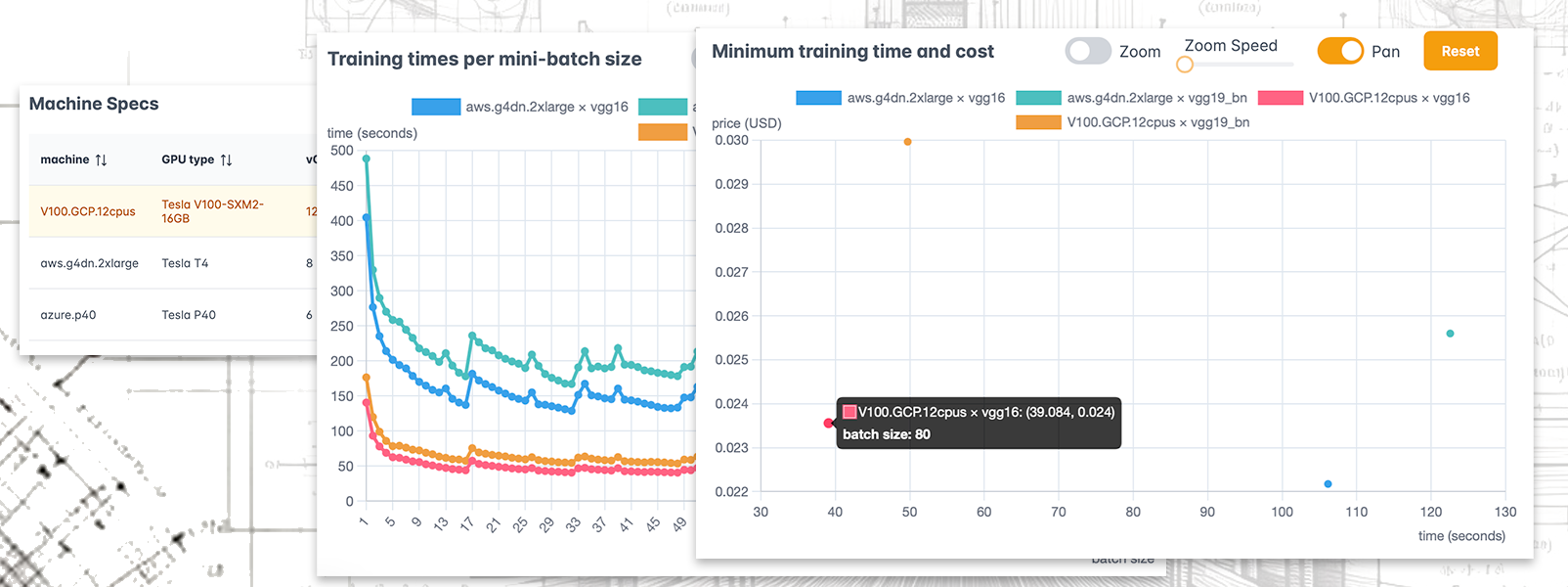

このアプリケーションでは、31のCNNアーキテクチャと主要クラウドプロバイダーの3種類のGPUマシンに対して学習時間を予測することができます。今後、利用可能なCNNとマシンタイプの数は拡張する予定です。 選択したCNNとマシンの組み合わせごとに、アプリケーションは広いミニバッチサイズの範囲で学習時間を予測し、最小予測学習時間とコストを表示します。

ウェブアプリケーションの使い方は次の通りです。まずはプルダウンリストからCNNアーキテクチャとマシンインスタンスを選択し、学習エポック数と学習データサイズを設定します。選択できる学習データは、224×224ピクセルに切り取られたカラー画像で、一般的なImagenetの学習に対応しています。CNNの学習に使用できる最適化アルゴリズムは現在は1種類のみです。

検索ボタンをクリックすると、数秒後に2つのプロットが表示されます。右側のプロットは、選択されたCNNとマシンごとに、ミニバッチサイズの関数としての予測学習時間を表示します。左側のプロットは、各CNNとマシンの組み合わせに対する最小の学習時間(x軸)とコスト(y軸)に対応する点を表示します。ポイントにカーソルを合わせると、その最小値が達成されるミニバッチサイズも表示されます。

プロットの下には、マシンの仕様表が表示されます。

CNNの学習におけるミニバッチサイズの選択に正確な予測を使うことで、学習エポックごとに数秒節約することが可能になります!数百または数千のエポックを学習する場合、その節約は大きくなります!我々のアプリケーションは、学習時間だけでなく、必要なコストやエネルギー消費も節約するのに役立つことが期待できます。

学習時間の予測精度は高く、我々の実験では、一つのマシン上での全ての実験を通じて平均化した誤差(MAPE)はわずか4-6%でした。

我々のCNN学習時間予測の手法は、畳み込み層のベンチマークと機械学習技術を組み合わせたものです。CNNの学習時間を予測するには、そのすべての畳み込み層のベンチマークが必要です。

多くのCNNが共通の畳み込み層を持っていますが、新しいCNNアーキテクチャの予測には、まだ私たちのデータベースにない新しい畳み込み層のベンチマークが必要になる場合があります。1つのクラウドプロバイダ(AWS)では、予測時にオンラインベンチマークを実装しました。他のクラウドプロバイダでは、この機能はまだ利用できないため、一部のCNNアーキテクチャの予測ができない可能性があります。

予測は特定の学習環境を対象としています:CNNの学習はPyTorch 1.9.1、CUDA 11.1、およびcuDNN 8.0.5を使用して行われ、学習に使用される最適化アルゴリズムは、momentumとweight_decayパラメータが0に設定されたSGDです。

私たちはこのCNN学習時間予測アプローチについて、研究論文を執筆しました。この論文は間もなく一般に公開される予定ですが、公開される次第、こちらに論文へのリンクを追加します。

https://cnntimecost.stair.center でCNN学習時間予測アプリケーションをお試しください。ご質問やフィードバックがありましたら、ぜひお知らせください。

Training a convolutional neural network (CNN) takes a long time and requires extensive computational resources and energy. Accurate training time predictions are needed to reduce training time and, with it, budget and energy use. However, CNN training time is influenced by many factors, including hardware performance, software environment, training parameters, and CNN architecture. These factors can affect training time in unexpected ways, which makes accurate predictions extremely challenging.

We have developed a method that accurately predicts the training time of any CNN that satisfies certain requirements, on any NVIDIA GPU. NVIDIA GPUs are often used to accelerate CNN training. We have implemented this method as a freely available web application.

The application predicts training times for 31 CNN architectures and 3 GPU types across machine instances from three major cloud providers. We plan to expand the number of available CNNs and machine types. For each selected combination of CNN and machine, the application predicts the training time over a wide range of mini-batch sizes and shows the minimum predicted training time and cost.

Using the web application is simple: select the CNN architectures and machine instances you are interested in from the dropdown lists, then set the number of training epochs and the training data size. The training data is limited to color images cropped to 224×224 pixels, which is typical for ImageNet training. Currently, only one optimizer can be selected for training the CNNs.

Click the Search button, and two plots appear after a few seconds. The plot on the right shows the predicted training time as a function of the mini-batch size for each selected CNN and machine. The plot on the left shows one point per CNN and machine combination, positioned at its minimum training time (x-axis) and cost (y-axis). Hovering over a point also shows the mini-batch size at which those minimum values are achieved.
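
The selection behind each point in the left plot can be sketched as follows. This is a minimal illustration, not the application's code. The per-epoch times, the hourly price, and the function name `min_time_and_cost` are hypothetical, and the sketch assumes that cost is simply the training time multiplied by an hourly instance price.

```python
from typing import Dict, Tuple


def min_time_and_cost(
    epoch_seconds_by_batch: Dict[int, float],
    epochs: int,
    price_per_hour: float,
) -> Tuple[int, float, float]:
    """Return (best mini-batch size, total training hours, total cost)."""
    if not epoch_seconds_by_batch:
        raise ValueError("no predictions given")
    # Pick the mini-batch size with the smallest predicted time per epoch.
    best_batch = min(epoch_seconds_by_batch, key=epoch_seconds_by_batch.get)
    total_hours = epoch_seconds_by_batch[best_batch] * epochs / 3600.0
    # Assumption: cost is proportional to the time the instance is rented.
    return best_batch, total_hours, total_hours * price_per_hour


# Hypothetical predicted seconds per epoch for one CNN on one machine.
predictions = {32: 410.0, 64: 365.0, 128: 360.0, 256: 372.0}
batch, hours, cost = min_time_and_cost(predictions, epochs=100, price_per_hour=3.0)
print(f"mini-batch {batch}: {hours:.1f} h, cost {cost:.2f}")
```

Under this pricing assumption, the mini-batch size that minimizes time on a given machine also minimizes cost, so a single point per CNN and machine combination is enough to compare machines.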

A table with the machines’ specifications is presented below the plots.

Using accurate predictions to select the mini-batch size for CNN training can save up to several seconds per training epoch. Over hundreds or thousands of epochs, the savings add up: saving 3 seconds per epoch over 1,000 epochs, for example, amounts to 50 minutes. Our application can therefore help you save training time, as well as the associated cost and energy consumption!

The prediction accuracy is high: in our experiments, the mean absolute percentage error (MAPE), averaged across all experiments on one machine, was just 4-6%.
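
For reference, MAPE is the mean of the absolute relative errors, expressed as a percentage. The sketch below uses the standard definition. The measured and predicted times are made-up values for illustration, not results from our experiments.

```python
from typing import Sequence


def mape(measured: Sequence[float], predicted: Sequence[float]) -> float:
    """Mean absolute percentage error of predictions against measurements."""
    if len(measured) != len(predicted) or not measured:
        raise ValueError("inputs must be non-empty and of equal length")
    # Each term is the absolute error relative to the measured value.
    relative_errors = [abs((m - p) / m) for m, p in zip(measured, predicted)]
    return 100.0 * sum(relative_errors) / len(relative_errors)


# Hypothetical measured and predicted training times in seconds.
measured_times = [100.0, 200.0, 400.0]
predicted_times = [95.0, 210.0, 380.0]
error = mape(measured_times, predicted_times)
print(f"MAPE: {error:.1f}%")  # each prediction is off by 5%, so about 5.0%
```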

Our approach to predicting CNN training times combines convolutional layer benchmarking and machine learning techniques. Predicting the training time of a CNN requires benchmarks of all its convolutional layers.

While many CNNs share common convolutional layer configurations, predicting the training time of a new CNN architecture may require benchmarks of convolutional layers that are not yet in our database. For one cloud provider (AWS), we have implemented online benchmarking during prediction. For the other cloud providers, this capability is not yet available, so predictions may not work for some CNN architectures.
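
The check for layers that still need benchmarking can be pictured as a lookup keyed by layer configuration. The sketch below is only an illustration under assumptions. The `ConvConfig` fields, the `missing_layers` helper, and the stored times are all hypothetical, and the real database may key layers on other parameters, such as mini-batch size or padding.

```python
from typing import Dict, List, NamedTuple


class ConvConfig(NamedTuple):
    """Hypothetical key that identifies one convolutional layer configuration."""
    in_channels: int
    out_channels: int
    kernel_size: int
    stride: int
    input_size: int  # height and width of the square input feature map


def missing_layers(
    layers: List[ConvConfig], database: Dict[ConvConfig, float]
) -> List[ConvConfig]:
    """Return the unique configurations without a benchmark, in first-seen order."""
    seen = set()
    missing = []
    for layer in layers:
        if layer not in database and layer not in seen:
            seen.add(layer)
            missing.append(layer)
    return missing


# Hypothetical benchmark database: configuration -> measured time in milliseconds.
benchmarks = {
    ConvConfig(3, 64, 7, 2, 224): 1.8,
    ConvConfig(64, 64, 3, 1, 56): 0.9,
}
new_cnn = [
    ConvConfig(3, 64, 7, 2, 224),
    ConvConfig(64, 128, 3, 2, 56),
    ConvConfig(64, 128, 3, 2, 56),  # a repeated layer is reported only once
]
to_benchmark = missing_layers(new_cnn, benchmarks)
print(to_benchmark)
```

An empty result means every layer already has a benchmark. A non-empty result lists the layers that would need online benchmarking, which is currently available only on AWS.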

The predictions target a specific training environment: CNN training is performed using PyTorch 1.9.1 with CUDA 11.1 and cuDNN 8.0.5, and the optimizer is SGD with the momentum and weight_decay parameters set to 0.
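
If you want to compare your own setup with this environment, a small check like the one below may help. It is a sketch, not part of the application: `TARGET_ENVIRONMENT` and `environment_mismatches` are hypothetical names, and the version strings are passed in by hand so that the example runs without PyTorch installed.

```python
from typing import Dict, List

# Versions stated above for the environment the predictions target.
TARGET_ENVIRONMENT = {"pytorch": "1.9.1", "cuda": "11.1", "cudnn": "8.0.5"}


def environment_mismatches(actual: Dict[str, str]) -> List[str]:
    """Describe every component whose version differs from the target."""
    problems = []
    for name, expected in TARGET_ENVIRONMENT.items():
        found = actual.get(name)
        if found != expected:
            problems.append(f"{name}: expected {expected}, found {found}")
    return problems


# In a PyTorch session the values could come from torch.__version__,
# torch.version.cuda and torch.backends.cudnn.version(). These may need
# normalizing first (for example a build suffix on the PyTorch version, or
# cuDNN reported as an integer). The matching optimizer would be
# torch.optim.SGD(params, lr=..., momentum=0, weight_decay=0), where the
# learning rate is your own choice and is not specified here.
my_environment = {"pytorch": "1.9.1", "cuda": "11.3", "cudnn": "8.0.5"}
problems = environment_mismatches(my_environment)
print(problems)
```

Predictions for a setup that differs from the target environment may be less accurate, since the software environment is one of the factors that influence training time.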

We have written a research paper detailing our CNN training time prediction approach. It will be publicly available soon, and we will add a link to it here once it is published.

Try out the web application at https://cnntimecost.stair.center to predict CNN training times yourself! Let us know if you have any questions or feedback.